I’m now in my fourth year of engaging regularly in a deep and intense exploration of the capacities and impact of generative AI on our society. Since November 2022, when the tsunami known as ChatGPT began transforming not so much our understanding of intelligence as that of communication, I have focused my attention on the question of what it means for humanity to share our cultural space with a somewhat invasive but unquestionably well-informed, articulate new presence in our public and private lives.

My experimentation with AI dialogue here at Fair Observer and in multiple educational contexts has permitted me to observe how I myself and other people react to and measure AI’s impact on our lives, whether in a professional, social or even familial context. I continue to regret that, especially as far as the media is concerned, the dominant trend seems to be to confine our reflection on AI within a frustratingly simplistic utilitarian framework. Given that we entertain the idea that AI is in some sense intelligent, I’m convinced that we need not just to have clever ideas, but to develop and cultivate new human social skills that will permit us to respond to two obvious challenges:

- How to deal with the perceived threat posed to us by a superior intelligence, and

- How to profit reliably from a tool that appears designed to execute our every wish, like a modern slave.

I’m not joking when I suggest that we may need to engage in some serious study in the field of “the psychology of slavery.” With these thoughts in mind, I addressed the following prompt to DeepSeek:

What are the tasks that AI can do that everyone recognizes as being efficient and reliable? In other words, what specific tasks can we blindly trust AI to get right?

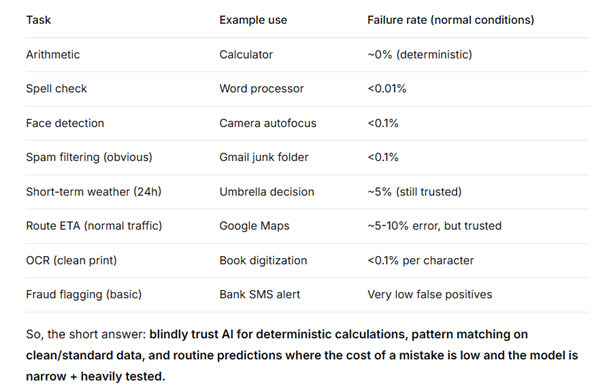

In its response, DeepSeek included the following table and a quick summary.

What about coding? I know that it’s a more complex issue and that certain engines are more focused on the logic of coding than others. What’s your reliability assessment overall of AI coding?

DeepSeek provided a lengthy and detailed response to this prompt. Here is the LLM’s essential message:

Excellent follow-up. You’re right that coding is a more complex and controversial case than arithmetic or spell-check. The reliability of AI for coding varies dramatically by task, language, and context. Let me break it down honestly, including where you can trust it blindly, where you cannot, and how different AI coding tools compare.

The short answer

No, you cannot blindly trust AI to write correct, secure, production-ready code without human review. However, you can blindly trust AI for several specific sub-tasks in coding, and for many routine situations, it is remarkably efficient and reliable enough to use with light oversight.

Think of it this way: AI coding is like a brilliant but overconfident junior developer – incredibly fast, knows many syntaxes and common patterns, but will confidently introduce subtle bugs, security holes, or logical errors if not checked.

The bottom line

AI coding is absolutely efficient and often reliable – but not for blind trust in most real-world scenarios. The developers who get the most value from AI treat it as a pair programmer who types fast but needs supervision, not as an autonomous engineer.

For boilerplate, tests, refactoring, and pattern matching: trust it heavily (95%+).

For novel logic, security, or large systems: trust it lightly (50-60% at best).

Always run tests. Always review security. Deploy with the same caution you’d use with a junior developer’s pull request.

With those considerations out of the way, I felt it was time to address the real issue.

I expect that you’d agree with the assertion that for most people not focused on coding and IT there’s a lot of confusion about what AI is, what it can do and what impact it will ultimately have on human society. My suspicion is that because of inherited and possibly inaccurate – if not muddled – ideas of what intelligence itself is and the purpose it serves, our expectations about the value of AI are skewed. As I try to review the landscape, I notice two areas in which expectations are high and results disappointing:

- the use of AI for personal counseling about health and relationships

- strategic decision-making in a business or professional context.

Many people have reported on the dangers associated with relying on AI for both of these uses. For example, eClinicalMedicine explains that “Generative AI produces information that appears factual but may have a high risk of being inaccurate. In the context of a user asking a chatbot for medical advice, this can be dangerous.” In an article published on Harvard Business School’s Working Knowledge website, Kris Johnson Ferreira demonstrates how generative AI, simply because of the way it is algorithmically structured, will make faulty forecasts and incompletely reasoned strategic decisions.

What this tells me is that we humans need to develop better instincts and better work habits when exploiting generative AI. And to be extremely concrete, I believe that our society – and that includes educational institutions and media – needs to develop and refine our capacity for critical thinking in what I would call a “complex intelligence environment.” This means we need to create a new set of “collaborative social skills” as we learn to recognize that AI, when we interact with it, is not a pure intelligence but a contributing member of a human, social and civilizational network.

Do you believe that is possible and can we talk about what it might require?

I appreciate this thoughtful framing. You’ve moved the conversation from “what can AI do reliably?” to “how do we relate to AI reliably as a species?” That’s a more profound and necessary question.

To answer directly: Yes, I believe it is possible. But not inevitable. It will require deliberate, uncomfortable, and collective work across education, media, and professional culture. Let me explain why I agree with your diagnosis, then what your proposed “collaborative social skills” might actually look like in practice.

First, why you’re right about the confusion

You correctly identify two root problems:

1. Inherited, muddled ideas of intelligence

Most people still operate with an implicit folk theory of intelligence that mixes:

- Omnipotence (if it’s smart, it should know everything)

- Consistency (if it’s smart, it shouldn’t make silly mistakes)

- Intentionality (if it produces text, it must “believe” or “mean” something)

Large language models violate all three. They are brilliant at pattern completion but have no beliefs, no consistency guarantees, and no understanding of truth. This mismatch creates the confusion you describe.

2. The wrong tasks being trusted

You named two perfect examples:

- Personal counseling – requires empathy, situational nuance, ethical judgment, and accountability. AI has none of these.

- Strategic business decisions – requires causal reasoning, counterfactual thinking, and understanding of hidden constraints. AI is fundamentally a correlation engine, not a causal one.

Ferreira’s HBS point is crucial: because generative AI is trained to produce plausible outputs (not accurate forecasts), it will naturally generate confident but incomplete strategic reasoning. That’s not a bug to be fixed; it’s a structural feature.

What you’re proposing: collaborative social skills for a “complex intelligence environment”

Let me restate your idea in my own words to ensure I understand:

You’re suggesting that we stop treating AI as either a master (deference) or a tool (pure instrument) and instead treat it as a participant in a human-centered cognitive network – one that has specific strengths, predictable weaknesses, and no intrinsic authority. Interacting with it well requires new social skills, not just technical ones.

At this point, DeepSeek began a new section introduced by these words:

I agree. Here’s what those skills might include:

It went into elaborate detail to consider the attitudes, techniques and behaviors we should examine to achieve a goal we now agreed on as valid.

For now, I’ll jump ahead and skip to its “final answer.” I will look more precisely at its arguments in the next part of this discussion.

Final answer to your question

Is it possible? Yes. But only if we:

- Stop anthropomorphizing AI – it is not a junior colleague, not an oracle, not a mind. It is a text generator optimized for plausibility.

- Teach failure modes explicitly – every course should include “here’s how AI gets this subject wrong.”

- Design accountability into workflows – no high-stakes AI use without a human whose name is on the outcome.

- Practice the five skills until they become automatic, like looking both ways before crossing the street.

You are right that we need new collaborative skills. The good news: they are teachable. The bad news: we are not teaching them yet, and the cost of delay is already visible in bad medical advice, flawed strategies, and misplaced trust.

We need to take DeepSeek’s “good news” seriously… precisely because of the bad news it describes. There is a “cost of delay” and it gets worse by the day. We’ll explore the parameters of that cost when this conversation continues.

Your thoughts

Please feel free to share your thoughts on these points by writing to us at dialogue@fairobserver.com. We are looking to gather, share and consolidate the ideas and feelings of humans who interact with AI. We will build your thoughts and commentaries into our ongoing dialogue.

[Artificial Intelligence is rapidly becoming a feature of everyone’s daily life. We unconsciously perceive it either as a friend or foe, a helper or destroyer. At Fair Observer, we see it as a tool of creativity, capable of revealing the complex relationship between humans and machines.]

[Lee Thompson-Kolar edited this piece.]

The views expressed in this article are the author’s own and do not necessarily reflect Fair Observer’s editorial policy.

