International borders can be places of exclusion, violence and discrimination for those who do not qualify for the benefits of seamless international travel and mobility. Exclusionary factors can include race, ethnicity, national origin, gender identity, sex, prior travel history, protection needs, migration status and more. Now, the border has become a trial ground for invasive monitoring technologies. Algorithmic border governance (ABG) technologies affect almost every aspect of a person’s migration experience.

Recently, the Office of the United Nations High Commissioner for Human Rights discovered iris scans in refugee camps and artificial intelligence-driven lie detectors installed at international borders. Social media is being used to surveil refugees and citizens. Customs and Border Protection from the US Department of Homeland Security uses an AI tool called Babel X, which connects a person’s social security number to their location and social media. Robodogs, autonomous robots that can move on four or even two legs, are being deployed as force multipliers on the Mexico–US border. These are just a few of the unregulated, uncontrolled experimental initiatives that are quickly taking root. Technological advancement makes migration more nightmarish than ever before.

Frontex aerial surveillance highlights the life-saving potential of drones and aircraft, which can help those in maritime crises. Saving lives at sea ought to be the priority; a startling 25,313 people have perished in the Mediterranean since 2014. As it turns out, however, these deaths were caused by Frontex’s connivance, which “is in service of interceptions, not rescues.”

More than 7,000 international students may have been unjustly deported due to a flawed algorithm used by the UK government. They were erroneously accused of cheating on English language exams, with no evidence provided against them.

How can human rights professionals improve the dignity of individuals crossing international borders? How can they expose the reality of this terrible situation? How do migrants oppose these experiments? This piece examines some of the profound effects of ABG technologies on human rights with a human rights-based approach (HRBA).

ABG militarization and border AI

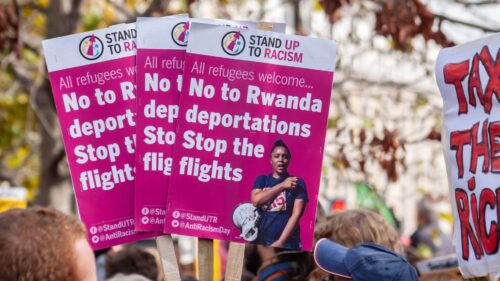

Racist and xenophobic sentiments against refugees, asylum seekers, migrants and stateless persons are increasing. These can be fuelled by the AI-driven militarization of borders and border governance. This involves tactics and policies that violate human rights, like pushbacks, extended immigration detention and refoulement. Border pushbacks are operations that prevent people from reaching, entering or remaining in a territory. Immigration detention is the practice of detaining migrants, especially those suspected of illegal entry, until immigration authorities can decide whether or not to let them through. Refoulement is the practice of returning migrants, often refugees or asylum seekers, to their own country or another where they may face persecution or serious harm.

UN agencies provide a wealth of information about the grave injustice and threats to human rights that migrants face at international borders. Threatened rights include freedom of movement, prohibition against collective expulsion and refoulement, the right to seek asylum and many others. In these situations, borderless algorithmic technologies are used to further security goals. They highlight and create new avenues for human rights problems.

The goal of ABG must be to respect human rights. This strategy should be based on two main objectives. First, it should comprehend how poorly planned strategies for the algorithmic governance of border movement may leave human rights unprotected. Second, it should evaluate how newly emerging technology may exacerbate pre-existing issues.

States use new algorithmic technology to identify individuals in transit near land, maritime and external borders, such as those of the European Union and the Schengen Zone. This technology includes ground sensors, surveillance towers, aerial systems, drones and video surveillance. AI has enabled tasks like movement detection and distinguishing between people and livestock. New ABG initiatives have repurposed technology for military or law enforcement applications, such as robodogs. States and regional bodies are using AI to forecast migration patterns, processing information from social media, Internet searches and cell phone data.

However, these efforts are primarily focused on stopping border crossings rather than assisting migrants. This has raised apprehensions among civil society groups, academia and international agencies. When used in a securitized approach to border regulation, these AI technologies could potentially violate human rights, like the right to asylum or the ability to leave one’s country of origin. The UN Working Group suggests that drones for maritime surveillance can help detect and maintain a safe distance from search and rescue activities, allowing migrants to reach secure harbors.

In 2021, the UN Special Rapporteur on the human rights of migrants released a study highlighting the use of pushbacks as a form of punishment, deterrent or targeting system. Migrants face significant danger at borders due to pushback policies, tactics, physical barriers and advanced monitoring technology. EU-funded pilot projects like iBorder Control focus on automated deception detection systems, face-matching tools, biometrics and document authentication apparatus. The Trespass program offers real-time behavior analytics that could uncover hidden intent through on-site observations and open-source mining.

Internalized borders and algorithmic risk assessments

As part of a goal to internalize borders, some states are attempting to identify individuals with irregular status through transformative digital technologies. This can happen years after the individual’s initial entry into the nation. Investigative journalists show that some immigration enforcement agencies have accessed databases of other state institutions, which are typically protected from law enforcement by firewalls. These agencies have attempted to identify people with irregular immigration statuses, putting them in danger of deportation or incarceration.

Certain states allegedly utilize data brokers to obtain information about things major and minor: prior employment, marriages, bank and property records, vehicle registrations, even phone subscriptions and cable television bills. Academics and civil society organizations have demonstrated the chilling impact that digital border technology may have on individuals exercising their rights. These include rights to housing, healthcare and education. If they are discovered, migrants may face severe repercussions.

According to reports, many migrants abstain from using record-keeping services that are essential to their family’s wellbeing, including child welfare, healthcare and legal systems. They avoid these out of concern that law enforcement may access their information and use it to detain, prosecute and deport them.

Algorithmic risk assessments are used in border administration, for example to assign higher risk classifications to visa applications and refer them to human decision-makers. Some states also use these assessments to decide whether to detain migrants. Concerns about human rights arise when AI assessments are applied in detention decisions.

Algorithms need large datasets to train. These datasets may contain biased and discriminatory information due to overrepresentation or underrepresentation of certain groups, particularly along the categories of gender, race and ethnicity. The algorithm’s weighting of input data and the results it generates also contribute to algorithmic discrimination. Researchers in the US have found that some algorithms may lean toward high-risk classifications in detention decisions, potentially leading to the detention of low-risk migrants. This is because algorithms’ apparent impartiality and scientific character may corroborate human officers’ prejudices, which can lead to stereotyping of and discrimination against certain groups.
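One way to make this concern concrete is to measure, for each group, how often people who are in fact low-risk are nevertheless classified as high-risk. The Python sketch below is only an illustration under stated assumptions: the function name `false_positive_rate_by_group`, the group labels and the records are all hypothetical, and none of it is drawn from any real risk assessment system.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Return, per group, the share of actually low-risk people flagged high-risk.

    Each record is a tuple: (group, predicted_high_risk, actually_high_risk).
    """
    flagged = defaultdict(int)   # low-risk people who were flagged high-risk
    low_risk = defaultdict(int)  # all low-risk people seen in each group
    for group, predicted_high, actually_high in records:
        if not actually_high:
            low_risk[group] += 1
            if predicted_high:
                flagged[group] += 1
    return {group: flagged[group] / low_risk[group] for group in low_risk}


# Hypothetical toy data, invented purely for illustration.
records = [
    ("A", False, False), ("A", False, False), ("A", True, False), ("A", True, True),
    ("B", True, False), ("B", True, False), ("B", False, False), ("B", True, True),
]

rates = false_positive_rate_by_group(records)
for group, rate in sorted(rates.items()):
    print(f"group {group}: {rate:.0%} of low-risk people flagged high-risk")
```

In this invented data, low-risk people in group B are flagged twice as often as those in group A. A gap of that kind is one signal an audit might look for, though it does not by itself explain where the disparity comes from.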

States may use technology like voice and facial recognition reporting software, digital ankle shackles and electronic monitoring to substitute for traditional incarceration methods. However, the UN Committee on the Protection of the Rights of All Migrant Workers and Members of their Families notes that these automated actions may have unfortunate consequences. They could further stigmatize migrants, lead to burdensome requirements, cause detentions and prompt a growth of algorithmic detention regimes. Specific methods may impede people’s freedom of movement and enhance monitoring, even if they are not considered confinement.

The role of data and the future

AI technologies, and those employed in the ABG context generally, rely heavily on data. Input data is entered into them directly, and additional data is produced as a byproduct of their deployment. The data types many states store and use include fingerprints and facial images obtained for visas and travel authorization; data from social media accounts; data from automated border control technologies like e-gates and smart tunnels; health monitoring data; educational records; and employment status. Commercial corporations, international organizations and other states may also gather and share data.

The EU Artificial Intelligence Act aims to exclude current interoperable databases on criminal records, immigration and asylum from the usual safeguards offered for high-risk AI applications. Access to these databases facilitates merging immigration databases with data gathered for criminal proceedings. This raises several potential human rights risks, like violations of the rights to equality, privacy and freedom from discrimination. Rights to life, liberty and security are in jeopardy as well if indiscriminate data sharing leads to detention and deportation.

There are few formal regulations governing the design and deployment of digital technologies used at borders. AI is broadly unregulated as well. Despite this, the use of ABG technologies does not occur in a regulatory vacuum. States must uphold international human rights law. Governments and businesses must abide by the UN Guiding Principles on Business and Human Rights.

However, when states and businesses use digital border technology, noncompliance with these duties creates protection gaps. Firsthand accounts of those impacted by ABG technologies must be prioritized when implementing an HRBA framework for migration and ABG technology regulation. There need to be discussions between affected communities and policymakers, academics, technologists and civil society about the risks of using new technologies and about how to protect human rights. Mobile communities should be part of conversations about creating and using digital border technologies before their deployment, not after.

[Lee Thompson-Kolar edited this piece.]

The views expressed in this article are the author’s own and do not necessarily reflect Fair Observer’s editorial policy.
