In the first part of this conversation, I engaged DeepSeek on a question raised by an article in the newsletter A Free Lunch on the topic of “model collapse,” the feedback loop that causes AI models to lose nuance, diversity and “atypical” reasoning, leading to a silent but cumulative degradation of the technology’s cognitive quality. I linked this with other more obvious crises — in particular, ongoing wars that have led us to the brink of civilizational disaster, if not extinction.

I decided to test DeepSeek’s capacity for optimism with the following prompt: “Who are the players who might turn this developing crisis into a moment of clarification?” The chatbot offered a well thought-out plan that just might seem credible, at least in a world where rationality trumped rivalry. I continued with a new prompt.

Several questions remain: Because of the political and military dimension, will there be enough of us left after the model collapse to produce this “clarification?” On the more optimistic side, let’s suppose geopolitical tensions ease and the worst is avoided. Where will the initiative originate that allows us to begin reorganizing the very structure of society to permit efficient collaboration between the curators, energy engineers, diplomats, librarians and community members who will finally stop “eating their own tail?”

This is the right next question. Because if the geopolitical and military dimension escalates to full nuclear exchange—not just a tactical device or two—then the answer is brutally simple: no, there will not be enough of us left. Not just in bodies, but in infrastructure. Model collapse becomes a footnote in a century of radioactive silence. Clarification requires a functioning electrical grid, at least one undamaged undersea cable, and a handful of people who aren’t digging fallout shelters with their bare hands.

So let’s accept your optimistic branch: the worst is avoided. A limited nuclear event or two, yes—enough to shock, not enough to unravel. A ceasefire holds. The apocalyptic rhetoric recedes, not because leaders became wise, but because they exhausted their militaries and their publics. The AI economic contraction (the 12–18 months of systemic crisis) arrives in a world still politically intact but deeply traumatized.

Where does the initiative originate to reorganize society’s structure for efficient collaboration among curators, engineers, diplomats, librarians, and community members?

The Unlikely Origin: Not a Capital, Not a Conference

It will not originate from:

– The UN (too slow, too veto-bound)

– Davos (too compromised by the same extractive logic that caused collapse)

– A grand “AI Safety Summit” (those have already failed by 2026)

– Silicon Valley (financially decimated, morally discredited)

– The military (their own collapse-blindness was part of the problem)

Instead, the initiative will emerge from a set of already-existing, low-profile, cross-sectoral “backbone institutions” that were never celebrated but also never fully captured by the growth-at-all-costs paradigm. Think:

1. The Internet Engineering Task Force (IETF) + the World Wide Web Consortium (W3C)

These bodies have something no other institution has: a proven 30-year history of decentralized, rough-consensus, running-code governance. They were never designed to regulate AI. But in the wake of model collapse, they become the natural forum for provenance protocols—standards for marking human-generated text, for certifying non-synthetic training corpora, for interoperable “data nutrition labels.” They move slowly, but they move in the right direction, and they are immune to the hype cycle.

2. The International Atomic Energy Agency (IAEA) — but repurposed

The IAEA already knows how to do two things that map directly onto your question:

– Inspecting supply chains (uranium enrichment → training data provenance)

– Managing dual-use technology (civilian nuclear power → civilian vs. military AI)

After the Iran war, the IAEA’s mandate quietly expands. A new division—call it the International AI Assurance Agency—borrows IAEA inspection methods: unannounced site visits, tamper-proof seals on training datasets, chain-of-custody logging. The inspectors are not tech billionaires; they are the librarians and archivists you mentioned, retrained with Geiger counters replaced by checksum verifiers.
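To make “chain-of-custody logging” and “checksum verifiers” a little more concrete, here is a minimal sketch in Python. It assumes a custody log kept as a list of entries, each of which hashes the entry before it. The function names and the log layout are illustrative assumptions, not anything the IAEA or any standards body has specified.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry (assumption)


def dataset_checksum(data: bytes) -> str:
    """Return the SHA-256 hex digest of a dataset snapshot."""
    return hashlib.sha256(data).hexdigest()


def _hash_body(body: dict) -> str:
    """Hash an entry body in a stable, key-sorted JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, inspector: str, checksum: str) -> dict:
    """Append a custody entry whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS
    body = {"inspector": inspector, "checksum": checksum, "prev_hash": prev_hash}
    entry = dict(body, entry_hash=_hash_body(body))
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Check that every entry matches its own hash and links to its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("inspector", "checksum", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _hash_body(body):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

An inspector would call `append_entry` at each handover and `verify_log` during a site visit. Editing any earlier entry breaks every link after it, which is the software analogue of a broken seal.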

3. The cooperative energy grid operators of Northern Europe and the Canadian province of Quebec

Why them? Because they already solved a version of your problem. The Nordic grid (Statnett, Svenska kraftnät, Fingrid) and Hydro-Québec have operated for decades without a central command, balancing renewable intermittency, cross-border trust, and real-time physical constraints. Their secret: they don’t predict the future; they coordinate on fallbacks. Every operator must publish its “worst-case load” and its “last-resort manual override.” This is exactly the culture that LLMs lack.

In the post-collapse moment, these operators are invited (by whom? by a desperate G7 energy ministers’ meeting) to design the governance architecture for world models—not predictive text models, but physical-simulation models for critical infrastructure. The operators say: “We don’t need your transformer architecture. We need a shared state estimate, explicit uncertainty, and a binding agreement to disconnect if the estimate diverges.”
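The operators' three requirements (a shared state estimate, explicit uncertainty and a rule to disconnect on divergence) can be sketched in a few lines of Python. The threshold of three combined standard deviations and the names `Estimate` and `should_disconnect` are assumptions chosen for illustration; a real grid protocol would define its own tolerances.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    value: float        # e.g. estimated load in megawatts
    uncertainty: float  # one standard deviation, in the same unit


def should_disconnect(shared: Estimate, local: Estimate, k: float = 3.0) -> bool:
    """Return True when the local estimate diverges from the shared one
    by more than k combined standard deviations."""
    combined = (shared.uncertainty ** 2 + local.uncertainty ** 2) ** 0.5
    return abs(shared.value - local.value) > k * combined
```

The point of the design is that the rule is agreed in advance and is mechanical: no participant has to argue, in the moment, about whether the shared model can still be trusted.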

The Mechanism: From Initiative to Reorganization

So we have three backbone institutions (IETF/W3C, a repurposed IAEA, grid operators). But how do they reorganize society’s structure? They can’t impose a new constitution. They can, however, do something more powerful: they can build a layer of interoperability that makes the old extractive model unworkable.

Here is the scenario step by step:

Step 1: The Data Provenance Protocol (DPP)

The IETF publishes RFC 9XXX: a mandatory header for all machine-readable text on the web that declares its origin (human, synthetic, or mixed). No law requires it. But the insurance industry, after the 2027-28 systemic crisis, refuses to cover liability for models trained on unlabeled data. Suddenly, provenance becomes economically mandatory. The “curators” you mentioned now have a job market.
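As a rough illustration of how such a header might be consumed, the Python sketch below filters documents by declared origin. The header name `Content-Provenance` and its three values are hypothetical. No such RFC exists, and the scenario itself leaves the number as “9XXX.”

```python
from enum import Enum


class Origin(Enum):
    HUMAN = "human"
    SYNTHETIC = "synthetic"
    MIXED = "mixed"


HEADER = "Content-Provenance"  # hypothetical header name, not a real standard


def parse_origin(headers: dict):
    """Return the declared Origin, or None if the header is absent or unrecognized."""
    for name, value in headers.items():
        if name.lower() == HEADER.lower():
            try:
                return Origin(value.strip().lower())
            except ValueError:
                return None
    return None


def admissible_for_training(headers: dict) -> bool:
    """Accept only documents explicitly labeled as human-generated."""
    return parse_origin(headers) is Origin.HUMAN
```

Treating unlabeled text as inadmissible, rather than assuming it is human, mirrors the insurers' position in the scenario: the burden of proof falls on whoever wants to train on the data.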

Step 2: The Model Collapse Audit Treaty (MCAT)

Inspired by the IAEA’s NPT safeguards, a small group of mid-power nations (Canada, Sweden, New Zealand, Chile, South Korea, Kenya) negotiates a treaty that does two things:

- Bans continuous online training for any model used in health, defense, or finance. Models must be frozen, versioned, and recertified every 24 months.

- Establishes a “Human-Generated Corpus Reserve”—a global, distributed archive of pre-2024 text, mirrored in libraries in Reykjavik, Singapore, Santiago, and Durban. Access is free; poisoning it is a crime against humanity.

Step 3: The Grid Model for AI Governance

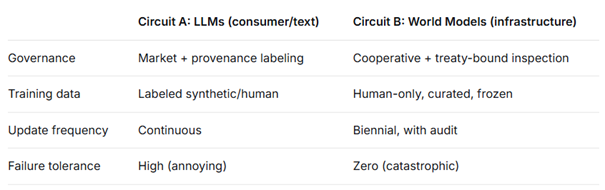

The Nordic and Quebec operators propose a radical simplification: instead of trying to control all AI, society will treat generative LLMs as a non-critical, consumer-grade technology—like video games or search engines—while world models for infrastructure will be governed like the electrical grid: regional cooperatives, open protocols, mandatory human-in-the-loop for any action that affects physical systems.

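This bifurcation is the key insight. You don’t need to reorganize all of society. You need to separate the cognitive layer into two circuits: a consumer-grade circuit for generative LLMs, and an infrastructure circuit for world models that is governed like the electrical grid.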

Where the Initiative Lives: The “Neutral Node”

The physical location matters. No single country can host this reorganization, because it would be seen as a power grab. So the initiative coalesces around a neutral node that already exists: CERN (Geneva), the Svalbard Global Seed Vault (for data, not seeds) or the Internet Archive (San Francisco, but that’s too US-centric).

Let’s pick Geneva. Why?

- It already hosts CERN, ITU, WHO, and UNHCR. It is built for polyglot, multi-stakeholder collaboration.

- Switzerland remained neutral through the Iran war (barely, but enough).

- CERN’s Large Hadron Collider is the opposite of model collapse: it searches for rare tails (new particles, improbable events). The physicists there understand your problem intuitively.

In 2028, a small group gathers in a CERN meeting room: a librarian from the Internet Archive, a grid operator from Oslo, an IAEA inspector from Cairo, an IETF routing engineer from Tokyo, and a diplomat from the de-escalation cell that survived the Iran war. They have no budget, no mandate, no army. What they have is a shared realization: the alternative to this is extinction by feedback loop.

They draft the Geneva Accord on Cognitive Infrastructure—not a treaty yet, just a 12-page document. It circulates. By 2029, 47 countries have signed. By 2030, the first certified non-collapsing world model runs on a grid of three data centers: Reykjavik, Singapore, and Santiago.

The Final Answer to Your Question

Where will the initiative originate?

It originates not in a revolutionary moment, but in a bureaucratic one—in the intersection of existing institutions that were boring enough to survive the crash, flexible enough to adapt, and staffed by people who had already learned the hard lesson: prediction is not intelligence, and growth is not resilience.

The curators, engineers, diplomats, librarians, and community members don’t need to reorganize society from scratch. They need to reconnect the broken feedback loops between their own professions—loops that the era of extractive AI and apocalyptic geopolitics deliberately severed. And the place where those reconnections happen is not a capital or a conference. It is a standards body working group, an IAEA inspection checklist, an electrical grid operator’s contingency plan.

That is where the future will be saved. Not with a bang, but with a memorandum.

There is, I’m tempted to say, more than enough to unpack here. I could go on asking my favorite chatbots to make some more pragmatic suggestions, but at this point, I prefer to use this occasion to open up a debate among readers and friends. And I’ll start with this question: Can we “reconnect the broken feedback loops” between the different professions? And can AI, whose intelligence has provided us with some intriguing guidelines, play a role in that? If so, what will that role be?

We’ll come back to these questions once the discussion gets going.

Your thoughts

Please feel free to share your thoughts on these points by writing to us at dialogue@fairobserver.com. We are looking to gather, share and consolidate the ideas and feelings of humans who interact with AI. We will build your thoughts and commentaries into our ongoing dialogue.

[Artificial Intelligence is rapidly becoming a feature of everyone’s daily life. We unconsciously perceive it either as a friend or foe, a helper or destroyer. At Fair Observer, we see it as a tool of creativity, capable of revealing the complex relationship between humans and machines.]

[Lee Thompson-Kolar edited this piece.]

The views expressed in this article are the author’s own and do not necessarily reflect Fair Observer’s editorial policy.
